I've Completely Changed How I Work

Sharing the motivation, approaches, and what it means for the future of staff engineering

This is a big reveal post, folks. I’ve completely changed how I show up and deliver work, and the radical transformation has happened over the last 90 days or so. I’m going to use this post to convince you to do the same and to show you how to do it if you aren’t already.

For this post, I assume you are using Cursor, Claude Code, or Codex.

Solely focusing on a “solve by default” mindset leads to greater impact, pushes us to think creatively about how to scale/automate, and results in a deep sense of accomplishment I can’t put into words.

We’ve talked a lot about solving, and as staff+ engineers, we solve all the time. Depending on the role, that can be solving work directly (e.g., code contribution), indirectly (e.g., influence, connecting the dots), or strategically (e.g., tech leading a project, setting strategy bets). Often it’s some combination of all the above. I’ve noticed that for me, the part of solving that takes the longest is “finding executors”. It’s not building a tech strategy or actually executing; once the work is owned, it can usually happen quickly.

Execution often requires working through planning processes, often with teams juggling multiple priorities. These challenges can also manifest in simple things, such as filing a support ticket for a bug in a production app, or trying to get a pretty small feature on a team’s backlog. There is a pretty long asynchronous waiting game that can emerge, and depending on how busy the team is, what may take a day to deliver has to sit in a holding pattern for 6 to 12 weeks.

Maybe the team is open to contributions. I can spend a few days/week learning their codebase and opening a PR. Given that my background is in security engineering, not software engineering, I would lack confidence in my code contributions, and ultimately, it would take me longer to deliver a production-ready solution.

These challenges are “pre-December 2025 challenges” if you have been using Claude or Codex. That is because

AI Fundamentally Breaks That Pattern

The recent wave of models has completely floored the barrier to entry for executing end-to-end.

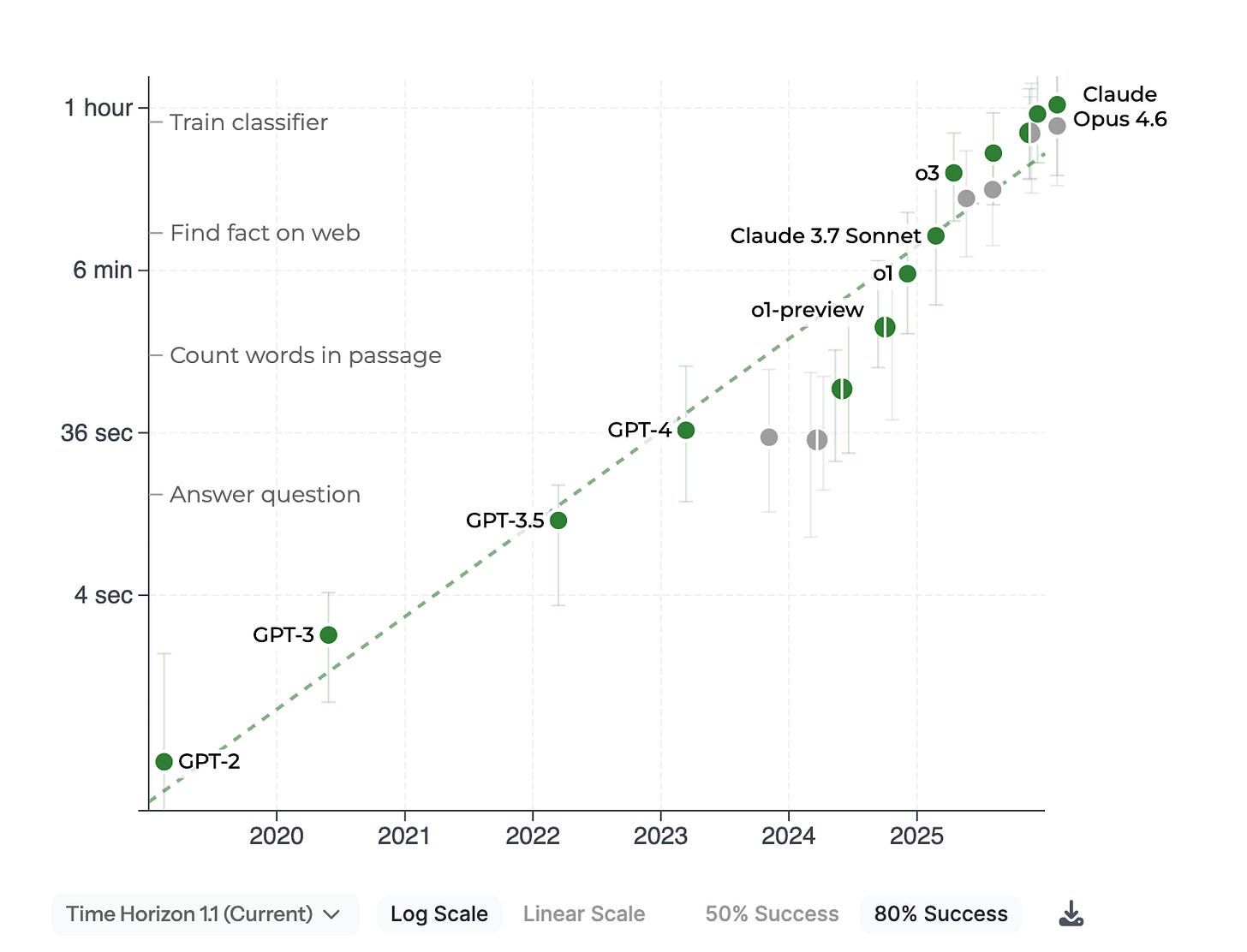

AI ability to complete long tasks (Source: https://metr.org/)

I’ve been playing around with building a user-friendly sandbox and noticed that some Go services I was sandboxing didn’t support proxies. Instead of filing a bug ticket, I just SOLVED BY DEFAULT.

I cloned the repo, and had Claude understand the code base, its history, conventions, style preferences, and how it does testing

It built the fix, tested the software, ensured linters and formatters were clear, updated documentation, validated the completeness of my solution, and submitted a PR

I got comments on the PR, which the Claude agent worked through

The PR was accepted into main, total turnaround time was ~1 hour

In my old way of working, I would have filed a ticket, and the feature could have been delivered in weeks or longer, depending on the roadmap.

Solve By Default Applies Across Almost All Things

More than code, this includes operational tasks for me, like security assessments and metrics/dashboards.

Old Model

When I see something that looks security-related, I throw it on my backlog. I ping a few coworkers as “this may be interesting,” but they also have a pretty stacked plate.

When I need data, I fumble through notebooks and run a lot of failed queries. I ping a few of my data engineer friends for a hand, and within a few days/weeks or more, I’m able to get the data I need.

New Model

I immediately assess it with a tailored set of security agents. I always validate results and provide steering, but the ability to scale is unprecedented.

When I need data to tell a story, I have my AI agents pull it, ensure it’s highly consistent, and build dashboards or notebooks.

When I start on any operational task, I ask: Could AI do this faster, or with less toil? Is this something I’ll do again and could build an automation or workflow for?

And it’s deeply rewarding. Work gets done faster, folks see my vision clearly, and I can solve enough of a problem on my own to often bring others along to help me solve.

What does it mean to be Staff+ in the post-AI world?

I think non-specialist Staff+ engineers will be required to be this AI native. Staff+ engineers often have a breadth of skills that gives them a competitive advantage in the AI world: applied systems thinking, architecture experience, and TPM/PM skillsets. The Staff+ engineer who adopts the practices I enumerate below sets themselves up to solve more problems by default.

Best Practices / Framing / How to Apply

Human Time and Focus are the Most Valuable Assets in the AI-Native World - We still solve problems and provide context/direction that AI cannot, and our time therefore becomes the most valuable asset, since AI is already commoditizing so many problems we used to have to hold and solve. Knowing what you uniquely provide and spending most of your time there, while offloading everything else to AI, sets you up for maximum impact. Not knowing what you uniquely provide sets you up to be replaced by AI.

Start using Agents daily - Not much to elaborate here, you should be using AI agents daily (so as to develop the skill/muscle needed to be effective with them). I still see some folks skeptical because they had a bad PR or breaking change, but haven’t worked through the possibility it was how they prompted the Agent.

No problem is off the table - When I have an idea, I turn to AI and say, is this possible? We brainstorm and spin up prototypes. Some of them fail, and that’s a useful learning. Some problems I can see a clearer solution to with a prototype, allowing me to either build on it or use it to convince a partner team to try to solve it.

Turn meetings into tangible products - I have an AI notetaker in each meeting, which has some prompts I can use to quickly turn a discussion about a strategy, risk, or project into PRD requirements. When working on strategy bets, my AI agents help me capture them so we can turn them around.

Turn memos into implementations - When I think of defaulting to writing something down, or someone shares a strategy doc, problem statement, idea, etc., I immediately ask Claude, “Let's take the relevant part of this and turn it into an implementation.”

Automate Like Crazy - Every product that eats up some of your time probably has native AI integration or opportunities for automation. This can range from creating spreadsheets to managing an inbox to keeping track of your work/accomplishments to writing your quarterly plan. Automation doesn’t have to be end-to-end; a Google AppScript may be sufficient or a runbook. Each minute we automate saves us time to focus on what’s most useful.

I’m a victim of the automator's trap (e.g., spending a week automating a thing that saves me 10 mins a year), so be careful about what makes sense to automate.

Try to have 2+ tasks - When I’m working with multiple agents, I like to have 2 discrete tasks minimum going. This allows me to swap between tasks for guidance or human-in-the-loop actions that are needed and not have much idle time. Some practitioners pride themselves on having dozens or more discrete tasks. I don’t, but if you do, by all means scale as much as you like.
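To put a number on the automator's trap mentioned above, here is a minimal break-even sketch. It assumes "a week" means five 8-hour working days; the function name and that constant are my own illustration, not a standard formula from any tool.

```python
def break_even_years(build_minutes: float, minutes_saved_per_year: float) -> float:
    """Return how many years of use it takes for time saved to equal time spent building."""
    if minutes_saved_per_year <= 0:
        raise ValueError("the automation must save some time per year")
    return build_minutes / minutes_saved_per_year


# Assumption: one working week = 5 days * 8 hours * 60 minutes.
WORK_WEEK_MINUTES = 5 * 8 * 60

if __name__ == "__main__":
    years = break_even_years(WORK_WEEK_MINUTES, 10)
    print(f"Break-even after {years:.0f} years")  # prints "Break-even after 240 years"
```

If the break-even point is measured in decades, a runbook or a one-off script is probably the better investment.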

Validate important work - Eventually, validation will be fully offloaded to LLMs (disagree? let me know why in the comments). You can build automated/deterministic validations with code linters, unit/integration tests, and test evidence (Playwright screenshots, packet captures). LLMs as a judge can do good validation work. But there may be critical pieces of your work that for now REQUIRE human validation (e.g., setting requirements, validating end state). Furthermore, I encourage you to look at the ‘big picture’ of the project or operation. LLMs that are working on a single feature aren’t thinking about the macro architecture choices you may want them to make.
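One way to picture the automated/deterministic layer of validation is a small gate that runs every check and reports which ones failed. This is only a sketch: `Check`, `command_check`, and `failed_checks` are hypothetical names of mine, and the two commands in the demo are placeholders standing in for whatever linter or test runner your project actually uses.

```python
import subprocess
import sys
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Check:
    """A named validation step; run() returns True when the check passes."""
    name: str
    run: Callable[[], bool]


def command_check(name: str, argv: List[str]) -> Check:
    """Wrap a command line so that exit code 0 counts as a pass."""
    def run() -> bool:
        try:
            return subprocess.run(argv, capture_output=True).returncode == 0
        except OSError:
            # The tool is missing or cannot be started: treat that as a failure.
            return False
    return Check(name, run)


def failed_checks(checks: List[Check]) -> List[str]:
    """Run every check (no short-circuiting) and return the names that failed."""
    return [check.name for check in checks if not check.run()]


if __name__ == "__main__":
    # Placeholder commands; swap in your own linter and test commands.
    checks = [
        command_check("always passes", [sys.executable, "-c", "pass"]),
        command_check("always fails", [sys.executable, "-c", "raise SystemExit(1)"]),
    ]
    failures = failed_checks(checks)
    print("FAILED: " + ", ".join(failures) if failures else "all checks passed")
```

A gate like this covers only the deterministic part. Requirements and end-state validation still sit with a human, as described above.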

Set up your day for building or operating with low cognitive load - I have structured my days to have long focus blocks near the end of the day for “building requirements” and mornings for “validating work”. I have been super-batching meetings (moving most 1:1s to Tue/Thu every other week), so I have longer full days for problem-solving.

Share Wins - If you do all this and tell no one, how do we all level up? I feel like some of this way of working is ‘bleeding edge knowledge’ that NEEDS to be shared. A huge part of your job as a staff+ engineer should be leveling everyone up! I have been doing a lot more talks, sharing resources with coworkers, a few pairing sessions, and just sharing my wins/fails and passion to spark it in others.

There are caveats. Some areas are really complex and can’t be solved by default the way I’m describing. Really complex work, especially cross-functional and multidisciplinary work, requires a lot of human/cognitive attention. GenAI writing is still ‘one voice’; even with good prompting, it doesn’t have the human creativity and imperfection we seek when reading an author’s work. It misses things and requires supervision. You will lose a day or two when a workflow or dev cycle goes wrong. In the grand scheme of things, I think all of these can be managed and are worth the tradeoff.

The tradeoff, to me, feels small because my impact is showing up sooner and more tangibly. This is still while working as an L8, where our work often spans quarters/years and is very broad/cross-cutting. Even on the broadest, most strategic work, I’m able to develop clearer strategies with AI assistance, often through assessments or prototypes.

I’ve never felt more energized in my entire career, and I hope that if you are a skeptic, you’d read this post and be open to trying it out!

Band Practice - Solve By Default Next week

At some point next week, when you’d default to “writing a memo”, “filing a ticket in another team’s backlog”, etc., tell your AI agent, “Let’s aim to solve this.” Brainstorm with the agent, then actually solve it.

Do this with a coworker on your team, and share how it went with them (wins, fails, learnings).

S.B.